Society suspended between a no longer and a not yet

The relationship between technology and society resembles that of a couple: each influences the other, but for this to happen there must be two parties. Using this interpretive key, and examining case studies that embody the most pressing issues in the debate on innovation, digital media sociologist Davide Bennato explains how our society is rapidly changing and what risks and opportunities can be expected.

Exactly thirty years ago, in the spring of 1994, research published in the scientific journal Computer Human Interaction by Clifford Nass, Jonathan Steuer and Ellen R. Tauber of Stanford University’s Department of Communication established a new experimental paradigm for the study of human-computer interaction. It demonstrated that individuals’ interactions with computers are fundamentally social: we unconsciously and automatically apply social processes when interacting with a technology that appears sufficiently authoritative, autonomous or sentient. Research in Human-Robot Interaction, for example, shows that robots are perceived as similar to humans and that humans interact with them on a social level. Today, as intelligent technologies progress, we can say that this theory is not limited to computers but extends to other technologies, such as robots and, of course, artificial intelligence. In particular, now that generative AI is beginning to pervade our daily lives through specialized apps that manage particular information flows, these apps will gradually turn from technologies into “social actors.”

We asked Davide Bennato (Sociologist of Digital Media, University of Catania) to explain what the entry of AI among the social actors of the 21st century means and what it implies.

“We are used to thinking of AI as a set of technologies that perform a specific task in response to a human exchange or a specific request from a person or another technology. What is happening, however, is that these AI systems are producing a kind of informational response, based on the specific request, by virtue of a kind of decision autonomy. This makes these technologies less and less static and increasingly interactive and, as such, gives them the property of being social subjects, i.e., real agents in the digital space; a space that is, however, also social, and in which these tools therefore behave as real ‘social actors’ (as people, groups and organizations are) that carry out precise activities over which we humans do not have complete control.”

A relevant implication is the use of specific technologies that fuel the disinformation market and, in so doing, undermine democracies.

“NewsGuard (an Internet service that specializes in verifying the reliability of news sources through a team of experienced journalists) and Comscore (a company that specializes in providing data for digital marketing) have released quite a disturbing report on what we might call the fake news industry. The two companies collaborated by cross-referencing their databases: a sample of 7,500 sites whose traffic is monitored by Comscore and 6,500 news sites analyzed by NewsGuard from the standpoint of trustworthiness and credibility. The purpose was to estimate the ad spending that reaches sites that are unreliable or otherwise responsible for spreading fake news. The findings cast a rather disturbing shadow over programmatic advertising (automated advertising based on algorithms that profile Internet users). Studies are multiplying, and all of them converge on the same results: misinformation is a truly toxic communication ecosystem, which makes money by exploiting the capacity of fake news to engage people by confirming their biases. Indeed, the data collected make it quite clear what the elements of this information industry are.

- First, the opacity of algorithms. All the research shows that the algorithms behind the platforms are very good at achieving their goals (profiling the advertising target, suggesting content), but often at the expense of fair use. These design flaws emerge only later, and once a market has been built on them it is very expensive, and therefore difficult, to reverse or redesign them.

- Second, the effect of the filter bubble. By now it is well known that the strategy of segmenting content on the basis of audience interests locks people inside a bubble that keeps out different content and alters their perception of the surrounding public opinion. This creates a reinforcing mechanism, the famous echo chambers, that radicalizes people’s positions. This socio-computational bias mechanism produces a social and cognitive environment that distorts the civil coexistence on which liberal democracies are based.

- Third, the exploitation of perceptual biases in information usage.”

In your latest book you say that while the 20th century is slow to wane, the 21st century is eager to rise. What do you mean by that?

“On the one hand, the social structures – such as the individual, family, work, media, culture, politics, and economy structures that inhabit these early decades of the 2000s – are still a legacy of the century just past, and their persistence is concrete testimony to their historical success. But this persistence does not mean that they are still the social structures we need, only that society (synecdoche for politics) cannot imagine alternative social structures.

On the other hand, digital technologies have shifted the axis of social power from institutionally organized elites to technologically organized majorities, from the politics of parliaments to the politics of the web. We have been talking since the 1990s about the process of disembedding of traditional institutions and re-embedding through new social institutions, and digital society has reinforced this.”

Through a collection of case studies examined through the lens of Latour’s actor-network theory, Bennato shows more concretely what he means by the intertwining of people, data and technologies, and how it is possible to glimpse the profound change that is shaping the socio-technical nature of the 21st century.

- The metaverse is defined as a technological dystopia in the service of a market-driven ideology.

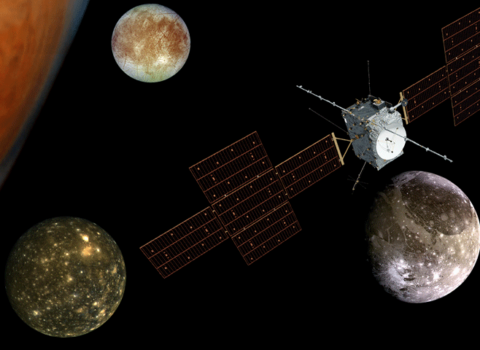

- The case of “Kazakhstan and bitcoin” describes the unchecked autonomy of cryptocurrencies and the destabilizing consequences in terms of environmental, economic and social sustainability.

- Operation Ironside focuses on the role of technologies to support law enforcement in the fight against international crime.

- The socio-economy of disinformation is presented as structuring a toxic productive and cultural ecosystem that compresses the spaces of democratic debate.

- The use of TikTok in the war in Ukraine shows how livestreaming has been used as a source of information from the front.

- Spontaneous activism on social media following the 2015 Paris attacks testifies to collective humanity as an emerging phenomenon.

- The alarm that Hollywood has raised about artificial intelligence, in tones reminiscent of the French Revolution, signals the growing precariousness in the industry and the rise of bot scriptwriters, synthetic actors, and deepfakes.

In all these emblematic examples, what makes the difference is the critical spirit, i.e., our ability to recognize “the traces of post-reality between human and non-human actors,” starting from the realization that, for better or worse, “those who guarantee the rules of verifiability are the people who recognize themselves in those rules: i.e., the community is the institution that guarantees the rules of verifiability.”

____________________________________________________________________________

Davide Bennato focuses on digital sociology, online content consumption and collective behavior on digital platforms. He is the author of the blog Tecnoetica.it and contributes to Agendadigitale.eu. His publications include Dizionario mediologico della guerra in Ucraina (edited with M. Farci and G. Fiorentino, Guerini 2023) and La società del XXI secolo. Persone, dati, tecnologie (Laterza 2024).