Public policy evaluation and spending review: notes from the field

At the core of this effort is the initiative of the Ministry of Economy and Finance (MEF) to strengthen the use of empirical evidence in spending decisions.

Luigi Einaudi’s famous quote, “to know in order to decide,” served as the guiding thread of the seminar organized by FBK-IRVAPP, which explored the relationship between public policy evaluation and public expenditure decisions. The event provided an opportunity to discuss an initiative launched in recent years within the State General Accounting Department. Its aim is to strengthen the capacity of central administrations to produce and use empirical evidence on the implementation and effects of public policies, so that policy decisions are better informed.

Departing from the more traditional approach of understanding a spending review as a means to simply cut expenditure, the initiative presented by Sisti sets out not just to spend less, but to spend better — to understand which policies work, for whom, under what circumstances, and with what scope for improvement.

From spending review to policy evaluation

The initiative emerged in the context of reforms set out under the PNRR (Italy’s National Recovery and Resilience Plan), particularly with regard to strengthening the functions of spending review and public policy evaluation. One feature that distinguishes it from previous efforts is the unprecedented availability of dedicated resources: the budget law provided for hiring specialist staff, engaging external experts, and delivering training activities.

This aspect is crucial. Evaluation is not built on good intentions or regulatory references alone — it requires expertise, time, data, organisational capacity, and a sufficiently robust institutional demand. In this spirit, three-year Spending Analysis and Evaluation Plans have been introduced: instruments through which ministries are expected to identify areas for intervention, evaluation questions, available data, analytical methods, and timelines for their delivery.

The first challenge, however, is even more basic: helping administrations recognise public policies as a proper object of their work. In everyday administrative practice, the most visible objects tend to be budget lines, spending authorisations, regulations, procedures, and compliance requirements. Public policy — understood as a set of actions aimed at addressing a collective problem — is a less immediately tangible analytical object. It exists, but it is not always easy to see.

Asking the right questions

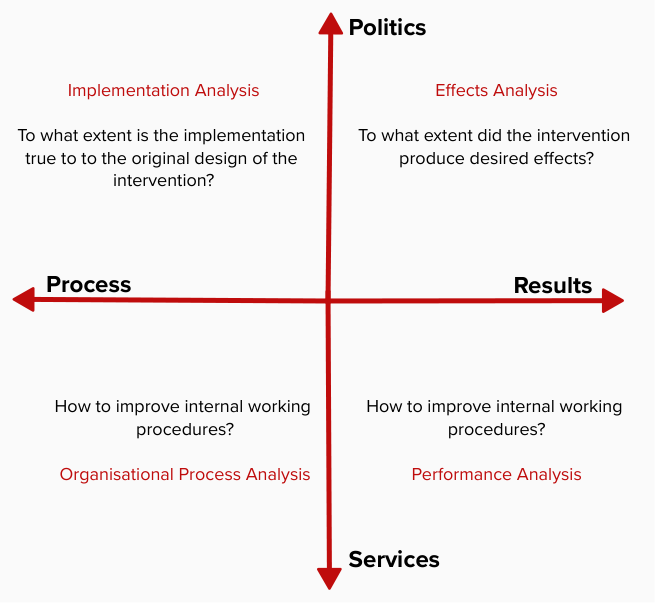

Another key aspect concerns the framing of evaluation questions. Not all questions are equal, and not all questions require the same methods. The seminar proposed a useful distinction between policies aimed at bringing about change and services to be delivered efficiently, and between process analysis and results analysis.

From this framework, several groups of questions emerge: Has a policy produced the desired change? Was the implementation faithful to the original design? Is the service being delivered efficiently? Which organisational processes hinder or support the achievement of objectives?

This classification, apparently simple, is in fact decisive. It allows often vague needs to be transformed into empirically tractable questions. And it is precisely at this stage that evaluation can become a genuinely useful tool for public administration — not an abstract exercise, but a means of clarifying problems, reconstructing mechanisms, and producing information that is relevant to decision-making.

The seminar also highlighted the importance of making better use of administrative data as a source for evaluation. One example discussed was the evaluation of the PRIN (Projects of Significant National Interest, funded by the Ministry of University and Research), carried out with the contribution of the Bank of Italy.

The challenge of using evidence

Evaluation does not end with the production of a report. One of the central questions to emerge from the seminar concerns what might be called the “last mile”: how to ensure that the evidence produced actually influences policy decisions.

In this regard, the experience described seeks to connect the recommendations contained in evaluation reports to concrete reform options — ideally in time to feed into budget allocation processes. This is a delicate step, as it requires not only technical quality but also institutional dialogue, trust, and the ability to translate analytical findings into workable administrative and political choices.

Open questions

The seminar also shed light on the difficulties of this journey. The availability of administrative data is never guaranteed; collaboration from ministries often depends on the outlook of individual managers and officials; evaluation can be perceived as a tool for auditing institutional performance — or even as a signal of potential budget cuts — rather than as an opportunity to learn and improve.

For this reason, alongside formal instruments, a shift in mindset matters enormously. Training, support, trust-building, and the creation of alliances between public administrations, research institutions, and academia are key to achieving it. Public policy evaluation requires rigorous methods, but also institutions capable of embracing uncertainty, questioning their own actions, and using evidence to do better.

The journey has only just begun. But the direction is clear: to bring evaluation out of the realm of rhetoric and into the everyday practice of public decision-making, so that decisions become better informed, more transparent, and results-oriented.