A conversation with Dr. Lennart E. Nacke on AI Augmentation In Games

How can the user experience of video games stimulate engagement and change behavior, particularly using artificial intelligence? Prof. Nacke, a pioneer in the field of games, gamification, and user experience, held a seminar on this topic at FBK on December 9, 2025.

Artificial intelligence (AI) is redefining the landscape of almost every industry, and games user research, where a multifaceted understanding of the player experience is crucial in everyday practice, is no exception.

Generative AI and machine learning offer the promise of unprecedented efficiency, reducing costs and time. However, the integration of these tools poses a crucial challenge for researchers: how can we leverage the power of AI while maintaining methodological rigor and research quality?

As a guest of Fondazione Bruno Kessler, Prof. Nacke addressed this issue in the seminar “AI Augmentation In Games User Research: How To Maintain Research Quality Using AI,” held on December 9 at FBK’s Povo headquarters.

The Future of Game User Research

Recognized as one of the top 10 scholars in Human-Computer Interaction over the last decade, Prof. Nacke described a decision-making framework for integrating AI ethically across three critical areas of research practice: identifying appropriate contexts for automation, validating AI-generated tools, and preserving essential human judgment.

1. Challenges and opportunities with AI efficiency

AI acts as an enabling tool, capable of transforming research results into practical and scalable applications. In the context of digital content production and analysis, AI is already capable of supporting human creativity by providing starting points for development (as in transmedia screenplays). In gaming, too, AI is transforming game design, enabling the generation of interactive worlds that evolve in real time based on player behavior. However, while promising the “democratization of technical quality,” the implementation of AI carries the risk of reducing the research process to “mechanical data processing,” losing sight of a deep understanding of player insights.

2. The three pillars for maintaining research quality

The framework proposed by Nacke focuses on three critical dimensions to ensure that AI-augmented user research maintains high standards of quality:

A. Identify appropriate automation contexts

AI cannot and should not replace human judgment at every stage. It is essential to understand where automation fails to capture the insights of players. Addressing the challenges of AI requires scientific rigor, which is achieved by preserving human skills—such as culture, imagination, empathy, critical thinking, and visualization—that machines cannot replicate.

B. Validate AI-generated tools and control quality

The use of AI-generated tools, from survey design to interview analysis, requires rigorous validation. Maintaining the quality of research and innovation is a key objective. The need for qualitative-quantitative methodologies and metrics is a recurring theme. In other research contexts, evaluation processes that rely heavily on quantitative data have been criticized for their inadequacy in capturing the complex nature of experience. Therefore, it is crucial to implement quality controls that go beyond simple mechanical measurement to ensure that automation does not compromise the validity of results.

C. Preserving human judgment and transparency

The most critical dimension of the framework is safeguarding the nuanced interpretation and creative intuition that are the hallmarks of effective research. This requires a strong commitment to ethics, integrity, and transparency. In sectors that make extensive use of information technology and AI, credibility and personal data protection are fundamental, in compliance with ethical and regulatory standards such as the GDPR and the AI Act. Researchers must also adopt transparency protocols to document the use of AI.

I’m delighted to introduce Joseph Tu, visiting scientist @ FBK Digital Society Center (MODIS Unit), an HCI researcher whose work focuses on how physiological signals can shape more adaptive, immersive, and accessible hybrid tabletop games. Drawing on expertise in UX design and physiological computing, he explores how to make play more inclusive, personal, and meaningful.

Joseph Tu: With AI enabling rapid content generation and procedural worlds, how should user research methodologies evolve to study experiences that are no longer static but infinitely adaptive?

Lennart Nacke: There are several ways that I can see this happening. For example, methodological adaptation and rigour would have to be brought to adaptive longitudinal studies. New methodologies should focus more on continuous longitudinal tracking to understand how player experiences in an adaptive environment change over time, for which we need tools that track player actions consistently. For researchers, that means establishing domain-specific validation frameworks, so that quality control detects when automated tracking tools fail to capture player insights. You would need performance thresholds and explicit testing of all AI-enhanced research instruments against those developed by experts. A great workflow here would combine the efficiency of AI tools with the interpretive depth and strength of human insights. AI would be used for high-throughput descriptive tasks (things like first-pass coding or summarizing the statistics), but with a mandate for human review so that humans can always add context-dependent themes, nuances, or interpretations.

A large part of this evolution is also that researchers actively maintain their ability to pass critical judgment, which they can achieve by manually coding core data samples first or doing calibrations within their team. So AI is always a co-coder, but the team keeps an AI failure log so that they learn where the weaknesses lie in the model they're using to help them co-code and interpret a data set.

And of course, as I mentioned in my talk, we need to be transparent in documenting how we use AI in the process so that we can create trust in the research that we're conducting with AI. That includes disclosing exactly which AI tools we use and where we use them, and keeping an audit trail with version logs so that we can go back and find any biases or anomalies in our process. Ideally, we want to have systems in place to check whether the use of AI tools has introduced any bias, and to quickly flag any weird or illogical patterns that the AI might find, which humans then have to investigate and correct.
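As an editorial illustration (not something shown in the seminar), the audit trail described above could be as simple as an append-only log with one entry per AI-assisted step. The sketch below is a minimal version under stated assumptions: the field names, the JSON Lines file format, and the helper names `make_audit_record`, `append_record`, and `load_records` are all hypothetical choices, not a standard.

```python
import hashlib
import json
from datetime import datetime, timezone


def make_audit_record(tool, version, step, prompt, reviewer):
    """Build one audit entry for an AI-assisted research step.

    All field names are illustrative assumptions. The prompt is stored
    as a SHA-256 hash so the log can show which prompt was used without
    copying potentially sensitive participant text into the log itself.
    """
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "tool": tool,                # which AI tool was used
        "version": version,          # model or tool version, for later bias checks
        "step": step,                # where in the research process it was used
        "prompt_sha256": hashlib.sha256(prompt.encode("utf-8")).hexdigest(),
        "human_reviewer": reviewer,  # who reviewed the AI output
    }


def append_record(path, record):
    """Append the record as one JSON line; earlier entries are never rewritten."""
    with open(path, "a", encoding="utf-8") as f:
        f.write(json.dumps(record, sort_keys=True) + "\n")


def load_records(path):
    """Read the whole audit trail back, one dict per logged step."""
    with open(path, encoding="utf-8") as f:
        return [json.loads(line) for line in f if line.strip()]
```

A team might call `append_record("audit.jsonl", make_audit_record(...))` each time an AI tool touches the data, then use `load_records` when tracing an anomaly back to a specific tool version.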

JT: Where do you see AI currently failing most dramatically in capturing nuanced player experiences?

LN: That's a very interesting question. I think, based on the studies that I talked about, we can currently say that AI often misses context-dependent and interpretive themes in qualitative data, and that it does not reach the depth of insight that humans do. It also has trouble with cultural subtleties and the meaning of nonverbal cues, things like pauses or the cadence of the words spoken. Here a human might add a lot more interpretation than the current AI models are capable of, because the models are just using textual patterns to generate insights. I do think the research generally advises against using AI in high-stakes research situations that involve trauma patients or complex power dynamics, where AI might simply not be suitable (for many reasons). Based on the research that I've read, I think AI tends to oversimplify subjective emotional expressions and keep the insights rather shallow. So, there is room for improvement here to capture the entire gamut of the human experience in the way that researchers can when they're having an interview with a person or when they're observing an actual gameplay session.

JT: How can researchers detect subtle biases introduced by language models or automated analysis tools when studying diverse player communities?

LN: I think in the current discourse around AI augmentation and games user research, we can detect the biases that come from automated analysis tools by implementing systematic validation processes and requiring mandatory human oversight of the outputs. As I mentioned, the key method here is human review: researchers must review areas where AI is suspected to have misinterpreted some data and watch out for any anomalies and biases that AI models may introduce in the data.

Researchers always have to check if the AI is possibly being unfair to a group of people or a culture or a type of game. We always must be able to flag and investigate any patterns we see in the data and need tools to assist us in doing so. To establish a baseline calibration here for human-AI co-coding, I think it's important that the codes or any patterns that the AI generates are always compared against the ones generated by humans. Of course, this entire process has to be documented so that people can follow it, along with the new processes that are being established here with AI. Any sort of documentation or logging of the entire research process and where AI is being introduced in that process will help us counteract any biases that might have been introduced into the interpretation of the data.
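As an editorial illustration of the baseline calibration described above, one common way to compare AI-generated codes against human-generated ones is Cohen's kappa, which measures agreement between two coders after correcting for chance. The interview does not name a specific statistic, so the choice of kappa, the example codes, and the function names `cohen_kappa` and `disagreements` are all assumptions made for this sketch.

```python
from collections import Counter


def cohen_kappa(human_codes, ai_codes):
    """Chance-corrected agreement between a human coder and an AI co-coder.

    Each list holds one code label per data segment, in the same order.
    """
    if not human_codes or len(human_codes) != len(ai_codes):
        raise ValueError("both coders must label the same non-empty set of segments")
    n = len(human_codes)
    # Observed agreement: share of segments where both coders chose the same code.
    observed = sum(h == a for h, a in zip(human_codes, ai_codes)) / n
    # Expected agreement if both coders labelled at random with their own code frequencies.
    human_freq = Counter(human_codes)
    ai_freq = Counter(ai_codes)
    expected = sum(human_freq[c] * ai_freq[c] for c in human_freq) / (n * n)
    if expected == 1.0:
        return 1.0  # both coders used a single identical code throughout
    return (observed - expected) / (1 - expected)


def disagreements(segments, human_codes, ai_codes):
    """List the segments a human should re-read: those where the two codes differ."""
    return [(s, h, a) for s, h, a in zip(segments, human_codes, ai_codes) if h != a]


if __name__ == "__main__":
    # Hypothetical interview segments and codes, for illustration only.
    segments = ["s1", "s2", "s3", "s4"]
    human = ["frustration", "flow", "flow", "social"]
    ai = ["frustration", "flow", "social", "social"]
    print(round(cohen_kappa(human, ai), 3))
    print(disagreements(segments, human, ai))
```

A low kappa, or disagreements that cluster around one player group or game genre, would be a signal to investigate the AI's codes before trusting them. The disagreement list doubles as raw material for the kind of AI failure log mentioned earlier in the interview.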

JT: What skills do you believe future game user researchers (or future related careers) will need?

LN: I think the user researchers of the future really have to focus on more of a higher-level senior researcher position where they interpret and strategically integrate AI into their workflows. They need to be able to audit and validate AI automations and agentic AI systems. This of course includes being able to create well-structured, clear prompts and iterate on them to improve how accurate the AI is, which is particularly important in qualitative research. So prompt engineering skills are a huge one. And they also must be very good at building an argument that justifies why and how they use AI for certain tasks.

It will remain essential for AI-assisted research to draw on the context in which research is conducted. So researchers must have strong skills to formulate final insights and recommendations based on such context—the community culture, the game design, and the situation in which a game is being played. Researchers must also have excellent documentation skills and really understand that every step of their AI-augmented journey has to be documented and logged, making it deployable repeatedly in the future. They need to understand how to create systems, protocols, and step-by-step instructions, which are key to making AI more useful. The better researchers are at breaking a task down into its subcomponents and contexts, the more useful AI will be for them.

Playing allows us to enjoy subjective and objective experiences that are impossible in real life, with systems of rules that allow us, once the game is over, whether we have won or lost, to take a step back and evaluate the experience: an elegant battle of wits in which the contenders have challenged each other to the best of their abilities. Giving players goals and the means to pursue them opens up a path, only apparently simple, to a complexity of values that qualifies the game as a fundamental and aesthetically meaningful moment in life. The game provides us with a series of agencies that we can temporarily take on and that are a sort of training ground for facing everyday challenges. In this way games can contribute to society; the use of game elements can help us make technology and cultural participation more engaging. With the increasingly widespread use of AI-based technologies, creative abundance is no longer the problem. The problem is what we put into it as human beings, what meanings—by choosing, creating, and guiding—we decide to sign with our name.

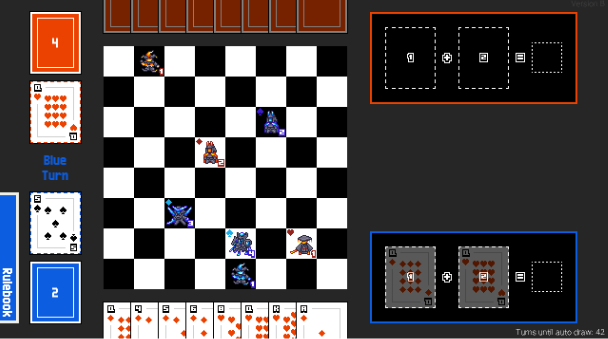

LEVI prototype game (2023) – with Simone Bassanelli, visiting researcher at The Games Institute, University of Waterloo (Canada).

One Pulse: Treasure Hunter’s GAME CHI 2025 (Conference): Designing Biofeedback Board Games: The Impact of Heart Rate on Player Experience

Space Scavenger Squad GAME CHI 2025 (Conference): From Solo to Social: Exploring the Dynamics of Player Cooperation in a Co-located Cooperative Exergame

Joker GAME CHI 2025 (Conference): Support Autonomy: Exploring Player Perspectives on AI-Supported Onboarding in Video Games

Dr. Lennart E. Nacke

A pioneer in games, gamification, and user experience. As a University Research Chair and Professor of Human-Computer Interaction (HCI) in Games at the University of Waterloo, he explores how the user experience of video and exercise games can drive engagement and change behaviours, especially using AI. Over the past 15 years, he has published more than 200 academic papers and a best-selling book on Games User Research. A sought-after keynote speaker, Dr. Nacke has advised organizations worldwide on effective gamification strategies and AI use. He was recognized among the top 10 HCI scholars of the last decade and the top 2% of scientists worldwide. His groundbreaking work continues to shape how we understand and apply AI, UX, and games research. https://lennartnacke.com/

Joseph Tu

As an HCI researcher, he explores how physiological signals can enable accessible, adaptive, and immersive hybrid tabletop game experiences. His research integrates physiological computing, UX design, and accessibility to develop playful, personalized, and inclusive game experiences. Fun fact: he began his research journey through interactive kinetic sculptures that used sound and motion, an art practice that still shapes his approach to designing cozy, emotionally resonant play experiences.